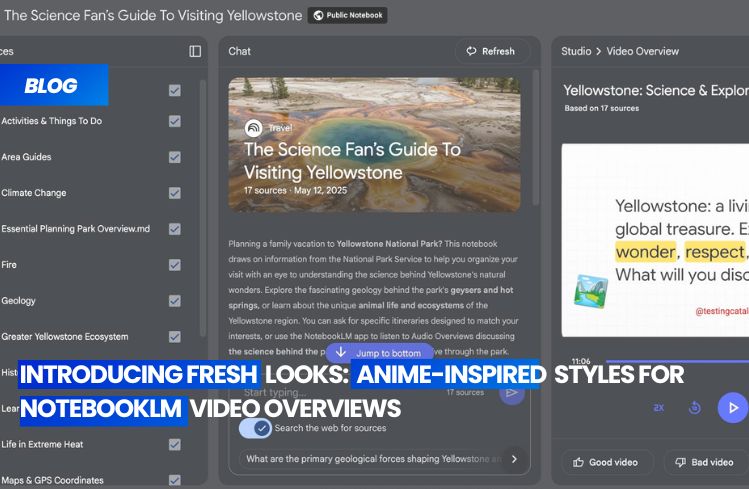

Google’s research assistant tool, NotebookLM, is set to receive a significant update to its video overviews feature. The move aligns with Google’s broader strategy of adding creative, media-rich outputs to its AI-driven productivity tools. Currently, users are limited to a single video overview format, “Explainer,” which provides a structured summary connecting the information sources within a project. Early builds, however, include a format selector menu, suggesting a shift similar to the one already seen in audio overviews, where users can choose between styles to suit their preferences or use case.

The changes to video overviews in NotebookLM are not confined to formats. Google also appears to be preparing a set of new visual styles to replace the current single, classic template. Leaked style options include “Whiteboard,” “Watercolour,” “Risographic,” “Heritage,” “Papercraft,” and “Anime.” These styles appear to offer more detailed and vibrant visuals than the current template. This push towards personalization and creativity across Google’s AI tools could make NotebookLM video summaries more appealing and accessible to a wider range of users, from educators and marketers to researchers seeking shareable media content.

These updates could also signal backend improvements, potentially tied to an upgrade of the Nano Banana model or the anticipated Veo 3.1 release, with the goal of richer, higher-quality output. No official timeline has been provided, but the features could be unveiled soon, possibly coinciding with Google’s upcoming wave of product launches. The addition of an auto-select function, designed to choose the best visual style for a given summary, would further lower barriers for users and could encourage wider adoption in educational and collaborative environments.

In essence, these updates would strengthen NotebookLM’s position in Google’s product lineup, making it a more versatile knowledge management platform that is better equipped to serve both individual and team workflows. As Google continues to expand AI integration across its products, NotebookLM’s multimedia features reflect the company’s effort to make its tools more intuitive, personalized, and creatively expressive.